Hello AI series: Image classification with ImageNet in JupyterLab on the Jetson Nano (Python)

In this post, Lingshun Lab (lingshunlab.com) shares Python code for classifying images with imageNet in JupyterLab.

Prerequisites

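To run the code below without errors, you need the following:

1. Jetson-Inference already built locally. For the build steps, see 《Hello AI》Building Jetson-inference on Jetson Nano (Build from source).

2. Pillow installed. Install it with: pip3 install Pillow

3. The image to classify saved in the same directory as the code.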
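The three prerequisites above can be checked from a notebook cell before running the full example. The following is a minimal sketch and not part of the original post: the helper name `check_prerequisites` is hypothetical, and it assumes the module names `jetson_inference` and `jetson_utils` (the ones imported in the notebook code) and `PIL` (the import name of Pillow). It only checks that the modules can be found and that the image file exists; it does not load a model.

```python
import importlib.util
from pathlib import Path


def check_prerequisites(image_name, directory=".",
                        modules=("jetson_inference", "jetson_utils", "PIL")):
    """Return a list of problems found; an empty list means every check passed.

    Hypothetical helper for illustration only.
    """
    problems = []
    for name in modules:
        # find_spec returns None when a top-level module is not installed
        if importlib.util.find_spec(name) is None:
            problems.append(f"module not found: {name}")
    image_path = Path(directory) / image_name
    if not image_path.is_file():
        problems.append(f"image not found: {image_path}")
    return problems


# Report anything missing for the image used in this post
for problem in check_prerequisites("orange_0.jpg"):
    print(problem)
```

If nothing is printed, the modules and the image were found and the notebook code should at least get past its imports and image loading.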

Complete notebook code

# welcome to lingshunlab.com
import sys
from jetson_inference import imageNet
from jetson_utils import videoSource, videoOutput, cudaFont, Log
from matplotlib import pyplot as plt

font = cudaFont()  # define the font
net = imageNet("googlenet", sys.argv)  # load the recognition model
input = videoSource("orange_0.jpg", argv=sys.argv)  # the image to classify
output = videoOutput("output_img.jpg", argv=sys.argv)  # where the annotated image is written

img = input.Capture()  # get the image data

# classify the image and return the results
predictions = net.Classify(img, topK=1)
predictions

# draw the predicted class labels
for n, (classID, confidence) in enumerate(predictions):
    classLabel = net.GetClassLabel(classID)
    confidence *= 100.0
    print(f"imagenet: {confidence:05.2f}% class #{classID} ({classLabel})")
    font.OverlayText(img, text=f"{confidence:05.2f}% {classLabel}",
                     x=5, y=5 + n * (font.GetSize() + 5),
                     color=font.White, background=font.Gray40)

output.Render(img)  # export the image to the directory

# display the exported image

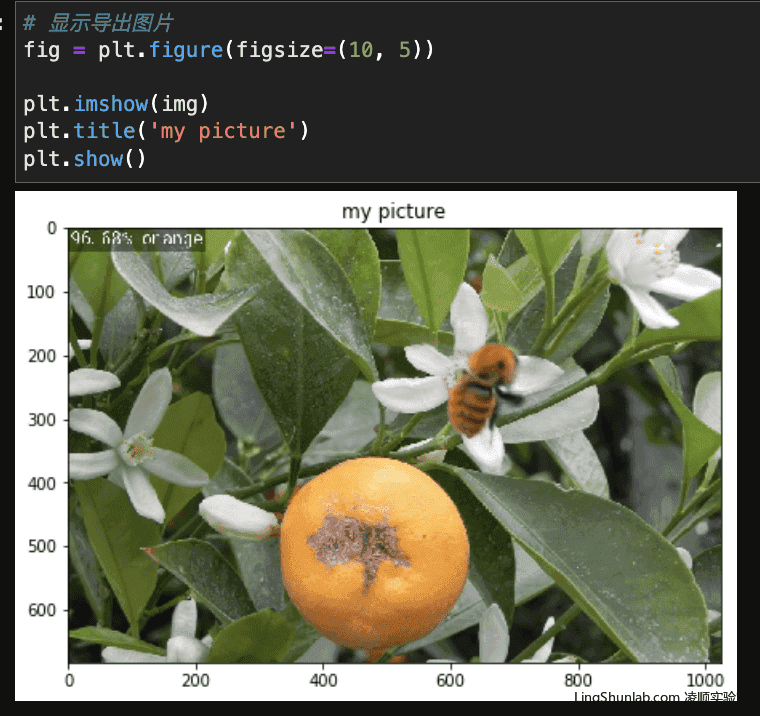

fig = plt.figure(figsize=(10, 5))
plt.imshow(img)
plt.title('my picture')
plt.show()

Download the complete JupyterLab notebook

Official Jetson-Inference Python example code

#!/usr/bin/env python3

#

# Copyright (c) 2020, NVIDIA CORPORATION. All rights reserved.

#

# Permission is hereby granted, free of charge, to any person obtaining a

# copy of this software and associated documentation files (the "Software"),

# to deal in the Software without restriction, including without limitation

# the rights to use, copy, modify, merge, publish, distribute, sublicense,

# and/or sell copies of the Software, and to permit persons to whom the

# Software is furnished to do so, subject to the following conditions:

#

# The above copyright notice and this permission notice shall be included in

# all copies or substantial portions of the Software.

#

# THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

# IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

# FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL

# THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

# LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING

# FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER

# DEALINGS IN THE SOFTWARE.

#

import sys

import argparse

from jetson_inference import imageNet

from jetson_utils import videoSource, videoOutput, cudaFont, Log

# parse the command line

parser = argparse.ArgumentParser(description="Classify a live camera stream using an image recognition DNN.",
                                 formatter_class=argparse.RawTextHelpFormatter,
                                 epilog=imageNet.Usage() + videoSource.Usage() + videoOutput.Usage() + Log.Usage())

parser.add_argument("input", type=str, default="", nargs='?', help="URI of the input stream")
parser.add_argument("output", type=str, default="", nargs='?', help="URI of the output stream")
parser.add_argument("--network", type=str, default="googlenet", help="pre-trained model to load (see below for options)")
parser.add_argument("--topK", type=int, default=1, help="show the topK number of class predictions (default: 1)")

try:
    args = parser.parse_known_args()[0]
except:
    print("")
    parser.print_help()
    sys.exit(0)

# load the recognition network

net = imageNet(args.network, sys.argv)

# note: to hard-code the paths to load a model, the following API can be used:

#

# net = imageNet(model="model/resnet18.onnx", labels="model/labels.txt",

# input_blob="input_0", output_blob="output_0")

# create video sources & outputs

input = videoSource(args.input, argv=sys.argv)

output = videoOutput(args.output, argv=sys.argv)

font = cudaFont()

# process frames until EOS or the user exits

while True:
    # capture the next image
    img = input.Capture()

    if img is None:  # timeout
        continue

    # classify the image and get the topK predictions
    # if you only want the top class, you can simply run:
    #   class_id, confidence = net.Classify(img)
    predictions = net.Classify(img, topK=args.topK)

    # draw predicted class labels
    for n, (classID, confidence) in enumerate(predictions):
        classLabel = net.GetClassLabel(classID)
        confidence *= 100.0

        print(f"imagenet: {confidence:05.2f}% class #{classID} ({classLabel})")

        font.OverlayText(img, text=f"{confidence:05.2f}% {classLabel}",
                         x=5, y=5 + n * (font.GetSize() + 5),
                         color=font.White, background=font.Gray40)

    # render the image
    output.Render(img)

    # update the title bar
    output.SetStatus("{:s} | Network {:.0f} FPS".format(net.GetNetworkName(), net.GetNetworkFPS()))

    # print out performance info
    net.PrintProfilerTimes()

    # exit on input/output EOS
    if not input.IsStreaming() or not output.IsStreaming():
        break
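The label drawn on each frame in the loop above comes from two small pieces of arithmetic: the `{confidence:05.2f}` format, which zero-pads the percentage to a minimum width of five characters, and the `y=5 + n * (font.GetSize() + 5)` offset, which puts each of the topK labels on its own line. The sketch below reproduces only that arithmetic in plain Python so it can be tried without a Jetson. The function names `format_label` and `label_y` are hypothetical, the sample predictions are made up, and the font size of 32 is an assumed value for illustration; on the device the real value comes from `font.GetSize()`.

```python
def format_label(confidence, class_label):
    """Format a 0..1 confidence and a class label the way the example overlays it."""
    percent = confidence * 100.0
    # 05.2f: at least 5 characters wide, zero-padded, 2 decimal places
    return f"{percent:05.2f}% {class_label}"


def label_y(n, font_size, margin=5):
    """Vertical pixel offset of the n-th label (n starts at 0)."""
    return margin + n * (font_size + margin)


# Made-up predictions in (confidence, label) form, for illustration only
predictions = [(0.9876, "orange"), (0.095, "lemon")]
for n, (confidence, label) in enumerate(predictions):
    print(label_y(n, font_size=32), format_label(confidence, label))
# prints:
# 5 98.76% orange
# 42 09.50% lemon
```

With a topK greater than 1, each additional label is therefore shifted down by the font height plus a 5-pixel gap, so the labels do not overlap.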

Reference:

https://github.com/dusty-nv/jetson-inference/blob/master/docs/imagenet-console-2.md